Display Objects

All objects on display reflect the history of computing at CERN, across CPU/processing, networking and storage.

| Object | Description | Era | ||

|---|---|---|---|---|

|

140Mb 9-track tape | With arrival of CDC 6600 at CERN in January 1965, there came the first half-inch wide 7-tracks tape units. |

||

|

CDC 6600 Magnetic Core Memory | A plane of magnetic core memory with 64x64 bits (4Kb) as used in a CDC 6600. The very first CDC 6600 |

||

|

10 MB disk platter from CDC 7638 | This magnetic disk was one of three which interfaced with various Control Data machines. This single |

||

|

IBM 3851 Mass Storage Cartridges | IBM 3850 MSS mass storage system (simply known as MSS) was first announced by IBM in late 1974 with data cartridges in form of circular cylinders able to store 50 megabytes of data. |

||

|

CERNET Interface Card | Homegrown networking technology pre-dating the internet. This is a CERNnet card developed and built |

||

|

10BASE5 Ethernet Cable & Vampire Tap | 10BASE5 Thick Ethernet Cable, 10Mbit/sec. In the 1980s and early 1990's, Ethernet became more |

||

|

StorageTek T10000 Tape Cartridge | Oracle StorageTek T10000T2 cartridge has total capacity of 5 TB. It is actually manufactured by Fuji |

||

|

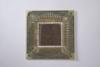

IBM 3090 CPU chips | The most powerful IBM computer system of its time, the IBM 3090 high-end processor of the IBM 308X computer series incorporated one-million-bit memory chips. |

||

|

NExT server | The first website at CERN - and in the world - was dedicated to the World Wide Web project itself and was hosted on Berners-Lee's NeXT computer. |

||

|

IBM 3390 Hard Disk Platter | The 3390 disks rotated faster than those in the previous model 3380. Faster disk rotation reduced |

||

| |

Brocade router | A modern 2.8TB/s router, the backbone of our internet connectivity. This model was in service at |

||

|

Optical Fibre Bundle | These are sample fibre optic cables which are used for networking. Optical fibers are widely used |

||

|

Disk Storage Server | This model was a disk storage server used in the Data Centre up until 2012. Each tray contains a |

||

|

CPU Server | The CERN computer centre has hundreds of racks lke these. They are over a million times more |

||

|

2TB hard disk drive | This particular object was used up until 2012 in the Data Centre. It slots into one of the Disk |